Confidential Containers pattern

About the Confidential Containers pattern

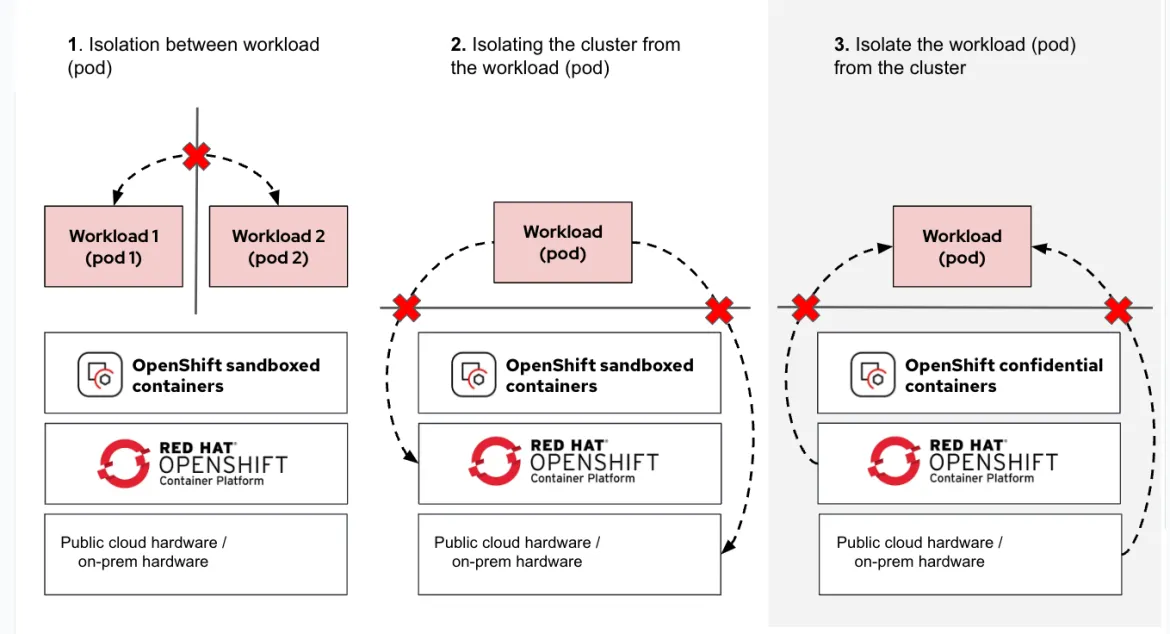

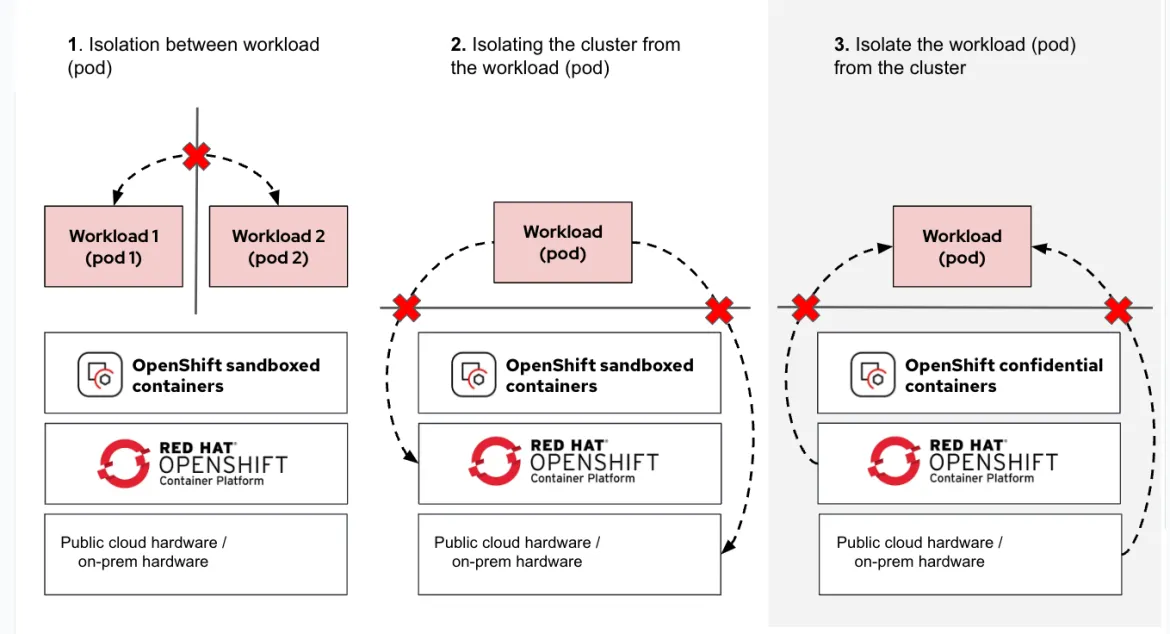

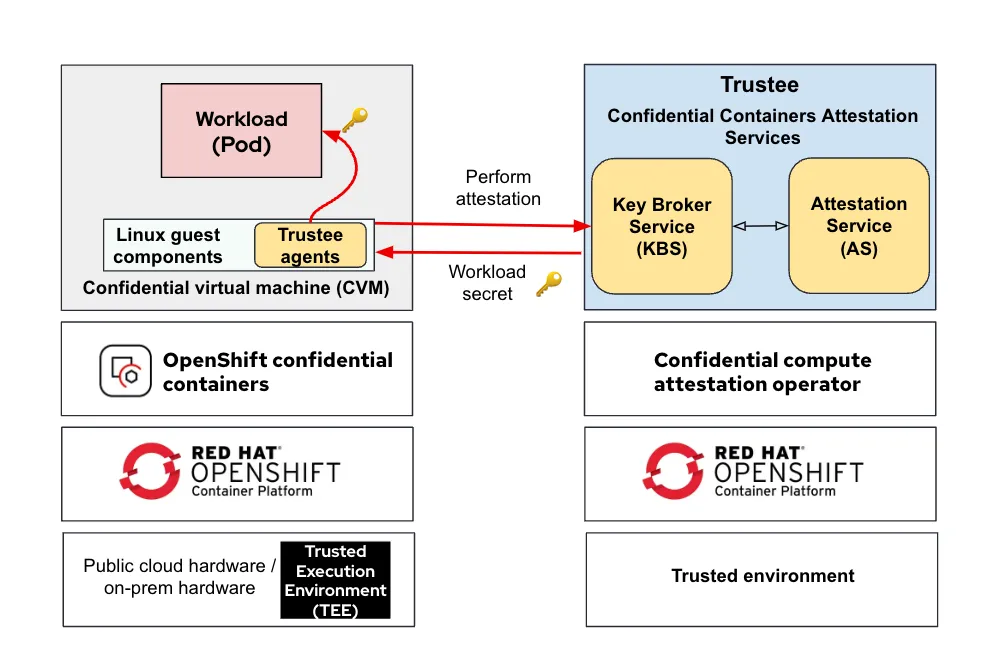

Confidential computing is a technology for securing data in use. It uses a Trusted Execution Environment (TEE), provided by the processor hardware, to prevent access by others who have access to the system, including cluster administrators and hypervisor operators. Confidential Containers is a project that standardizes the consumption of confidential computing by making the Kubernetes pod the security boundary. Kata Containers establishes that boundary by running the pod inside a shim VM.

A core goal of confidential computing is to isolate the workload from both Kubernetes and hypervisor administrators. In practice, this means that even a kubeadmin user cannot exec into a running confidential container or inspect its memory.

This pattern deploys and configures Red Hat OpenShift Sandboxed Containers for confidential computing workloads on both cloud (Microsoft Azure) and bare metal infrastructure.

Cloud deployments use "peer pods" — confidential VMs provisioned directly on the Azure hypervisor rather than nested inside OpenShift worker nodes. Azure offers multiple confidential VM families; this pattern defaults to the Standard_DCas_v5 family but can be configured to use other families via values-global.yaml.

Bare metal deployments support Intel TDX (Trust Domain Extensions) and AMD SEV-SNP (Secure Encrypted Virtualization - Secure Nested Paging) hardware TEEs, with optional Technology Preview NVIDIA confidential GPU support (H100, H200, B100, B200) for protected GPU workloads.

The pattern includes sample applications demonstrating security boundaries and secret delivery: three variants of 'Hello OpenShift' and a kbs-access web service for verifying end-to-end attestation and secret retrieval from the Key Broker Service.
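At the Kubernetes level, a confidential workload is an ordinary pod that selects a Kata-based RuntimeClass. The following is a minimal sketch, assuming the bare metal kata-cc RuntimeClass described later in this document; the pod name, namespace, and image are illustrative placeholders, not the pattern's actual Hello OpenShift manifests.

```yaml
# Minimal sketch of a confidential pod (name, namespace, and image are placeholders).
apiVersion: v1
kind: Pod
metadata:
  name: hello-confidential          # hypothetical name
  namespace: hello-openshift        # hypothetical namespace
spec:
  # Selecting a Kata-based RuntimeClass runs the pod inside its own
  # TEE-backed VM instead of directly on the worker node's kernel.
  runtimeClassName: kata-cc
  containers:
    - name: hello
      image: registry.example.com/hello-openshift:latest   # placeholder image
      ports:
        - containerPort: 8080
```

The RuntimeClass name depends on the platform (Azure peer pods and bare metal can differ), so list the classes actually installed with `oc get runtimeclass` before adapting this sketch.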

Deployment topologies

The pattern supports four deployment topologies, selected by setting main.clusterGroupName in values-global.yaml:

simple: Single-cluster Azure deployment with all components (Trustee, Vault, ACM, sandboxed containers, workloads) on one cluster

trusted-hub + spoke: Multi-cluster Azure deployment separating the trusted zone (hub with Trustee/Vault/ACM) from the untrusted workload zone (spoke)

baremetal: Single-cluster bare metal with Intel TDX or AMD SEV-SNP support

baremetal-gpu (Technology Preview): Bare metal with Intel TDX or AMD SEV-SNP and NVIDIA confidential GPU support (H100, H200, B100, B200)
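For example, a values-global.yaml fragment selecting the bare metal topology might look like the following. Only the main.clusterGroupName key comes from this document; the rest of the file is omitted, and the note about trusted-hub is an inference from the architecture description rather than a documented setting.

```yaml
# values-global.yaml (fragment): select the deployment topology.
main:
  # Single-cluster choices: simple, baremetal, baremetal-gpu.
  # For the multi-cluster topology, the hub would use trusted-hub (assumption);
  # the spoke group is applied to clusters imported via ACM.
  clusterGroupName: baremetal
```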

Requirements

Azure deployments

An Azure account with the required access rights, including quota for Azure confidential VM families (default: Standard_DCas_v5)

An OpenShift 4.19.28+ cluster within the Azure environment

Azure DNS hosting the cluster’s DNS zone

Tools: podman, yq, jq, skopeo

An OpenShift pull secret at ~/pull-secret.json

Bare metal deployments

OpenShift 4.19.28+ cluster on bare metal with Intel TDX or AMD SEV-SNP hardware

BIOS/firmware configured to enable TDX or SEV-SNP

HostPath Provisioner (HPP) for persistent storage

For Intel TDX: an Intel PCS API key from Intel Trusted Services

For GPU topology (Technology Preview): NVIDIA GPUs with confidential computing firmware (H100, H200, B100, B200)

Tools: podman, yq, jq, skopeo

An OpenShift pull secret at ~/pull-secret.json

Security considerations

This pattern is a demonstration only and contains configurations that are not best practice.

The pattern supports both single-cluster (simple clusterGroup) and multi-cluster (trusted-hub + spoke) topologies. The default is single-cluster, which breaks the RACI separation expected in a remote attestation architecture: the Key Broker Service and the workloads it protects run on the same cluster, so a compromised cluster could affect both. The multi-cluster topology addresses this by separating the trusted zone (Trustee, Vault, ACM on the hub) from the untrusted workload zone (spoke). The RATS architecture mandates that the Key Broker Service (e.g. Trustee) is in a trusted security zone.

The Attestation Service ships with permissive default policies that accept all container images without verification. This allows quick testing but is unsuitable for production: without image signature verification, an attacker with access to the container registry could substitute malicious images that would still receive secrets from the KBS.

Hardening attestation policies for production

For production deployments, configure strict attestation policies:

Enable the signed policy: Edit ~/values-secret-coco-pattern.yaml and change the attestation policy from insecure to signed:

    kbs:
      attestation:
        policy: signed  # Changed from 'insecure'

Generate and register cosign public keys: Container images must be signed with cosign. Generate a key pair:

    # Generate a cosign key pair (writes cosign.key and cosign.pub)
    cosign generate-key-pair

Then add the public key content to ~/values-secret-coco-pattern.yaml so the attestation service can use it:

    kbs:
      cosignPublicKeys:
        - |
          -----BEGIN PUBLIC KEY-----
          <your cosign public key content>
          -----END PUBLIC KEY-----

Sign your container images: Before deployment, sign all confidential container images:

    # Sign the image
    cosign sign --key cosign.key your-registry.io/your-image:tag

    # Verify the signature
    cosign verify --key cosign.pub your-registry.io/your-image:tag

Configure reference values for PCR measurements: For hardware-backed attestation, configure the expected PCR values in the policy. scripts/get-pcr.sh retrieves these values automatically, but they should be reviewed and locked down in production. See Updating PCR measurements for the workflow when peer-pod images change.

Without these hardening steps, the attestation service will approve any workload requesting secrets, defeating the confidentiality guarantees of the TEE.

Future work

Deploying to IBM Cloud and IBM Z confidential computing environments

Supporting air-gapped deployments

Enhanced AI/ML workload examples demonstrating confidential inference at scale

Architecture

Confidential Containers architecture separates two security zones:

Trusted zone: Runs the Key Broker Service (Trustee), attestation service, and secrets management (Vault). This zone verifies TEE evidence and releases secrets only to authenticated confidential workloads.

Untrusted zone: Runs the sandboxed containers operator, confidential workload pods, and the Kyverno policy engine. Workloads in this zone must attest to Trustee before receiving secrets.

The pattern supports both single-cluster and multi-cluster topologies. In single-cluster topologies (simple, baremetal, baremetal-gpu), all components run on one cluster. In the multi-cluster topology, the trusted-hub clusterGroup runs on the hub cluster and the spoke clusterGroup runs on managed clusters imported via ACM.
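In the Validated Patterns framework, a hub cluster group typically declares which ACM-imported clusters receive another cluster group by matching cluster labels. The fragment below is a sketch of how the hub's values file might map the spoke group; the file name and label values are assumptions based on common framework conventions, not copied from this pattern.

```yaml
# values-trusted-hub.yaml (fragment, assumed layout)
clusterGroup:
  name: trusted-hub
  isHubCluster: true
  managedClusterGroups:
    spoke:
      name: spoke
      # Clusters imported into ACM with the label clusterGroup=spoke
      # receive the spoke cluster group's applications.
      acmlabels:
        - name: clusterGroup
          value: spoke
```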

Kyverno’s role: The pattern uses Kyverno to dynamically inject attestation agent configuration (cc_init_data) into confidential pods at admission time. An imperative job generates ConfigMaps containing the KBS TLS certificate and policy files. Kyverno propagates these ConfigMaps to workload namespaces and injects them as pod annotations, ensuring pods have the correct configuration for attestation without manual annotation management.
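The injection step can be sketched as a Kyverno mutate rule that reads a ConfigMap in the pod's namespace and copies its content into a pod annotation. This is a minimal illustration, not the pattern's shipped policy: the policy name, the ConfigMap name initdata, its data key INITDATA, and the assumption that the ConfigMap already holds the encoded cc_init_data value are all hypothetical. The annotation key follows the Kata Containers initdata convention; verify it against your sandboxed containers version.

```yaml
# Hypothetical Kyverno policy sketch: copy pre-encoded initdata from a
# ConfigMap into pods that request the kata-cc RuntimeClass.
apiVersion: kyverno.io/v1
kind: ClusterPolicy
metadata:
  name: inject-cc-init-data            # hypothetical name
spec:
  rules:
    - name: add-cc-init-data-annotation
      match:
        any:
          - resources:
              kinds:
                - Pod
      # Only mutate pods that request the confidential RuntimeClass.
      preconditions:
        all:
          - key: "{{ request.object.spec.runtimeClassName || '' }}"
            operator: Equals
            value: kata-cc
      # Look up the ConfigMap assumed to be propagated into this namespace.
      context:
        - name: initdata
          configMap:
            name: initdata               # hypothetical ConfigMap name
            namespace: "{{ request.namespace }}"
      mutate:
        patchStrategicMerge:
          metadata:
            annotations:
              # INITDATA is a hypothetical data key holding the encoded value.
              io.katacontainers.config.hypervisor.cc_init_data: "{{ initdata.data.INITDATA }}"
```

Keeping the encoded value in a ConfigMap means a certificate or policy change only requires regenerating one object, while existing pods keep the annotation they were admitted with.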

Key components

Red Hat Build of Trustee 1.1: The Key Broker Service (KBS) and attestation service. Trustee verifies that workloads are running in a genuine TEE before releasing secrets. Certificates for Trustee are managed by cert-manager using self-signed CAs.

HashiCorp Vault: Secrets backend for the Validated Patterns framework. Stores KBS keys, attestation policies, and PCR measurements.

OpenShift Sandboxed Containers 1.12: Deploys and manages confidential container infrastructure. On Azure, provisions peer-pod VMs; on bare metal, configures Kata runtimes for TDX/SEV-SNP. Operator subscriptions are pinned to specific CSV versions with manual install plan approval to ensure version consistency.

Kyverno: Policy engine that dynamically injects cc_init_data annotations into confidential pods. It manages the distribution of attestation agent configuration (KBS TLS certificates, policy files) from centralized ConfigMaps to workload namespaces.

Red Hat Advanced Cluster Management (ACM): Manages the spoke cluster in multi-cluster deployments. Policies and applications are deployed to the spoke via ACM’s application lifecycle management.

Node Feature Discovery (NFD) (bare metal only): Detects Intel TDX and AMD SEV-SNP hardware capabilities and labels nodes accordingly for runtime class scheduling.

Intel DCAP (bare metal with Intel TDX): Provisioning Certificate Caching Service (PCCS) and Quote Generation Service (QGS) for Intel TDX remote attestation via the Intel PCS API.

NVIDIA GPU Operator (GPU topology only, Technology Preview): Manages NVIDIA confidential GPUs (H100, H200, B100, B200) with CC Manager, VFIO passthrough, and Kata device plugins for GPU-enabled confidential workloads.
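Pinning an operator to a specific CSV with manual install plan approval is standard OLM behavior. A raw Subscription sketch for the sandboxed containers operator might look like the following; the channel name and the CSV version string are assumptions to check against the catalog on your cluster.

```yaml
# Sketch of an OLM Subscription pinned to one CSV with manual approval.
apiVersion: operators.coreos.com/v1alpha1
kind: Subscription
metadata:
  name: sandboxed-containers-operator
  namespace: openshift-sandboxed-containers-operator
spec:
  name: sandboxed-containers-operator        # package name in the catalog
  source: redhat-operators
  sourceNamespace: openshift-marketplace
  channel: stable                            # assumed channel name
  # Install exactly this CSV (version string is an assumption).
  startingCSV: sandboxed-containers-operator.v1.12.0
  # OLM creates InstallPlans but waits for an administrator to approve them,
  # so the operator is not upgraded past startingCSV automatically.
  installPlanApproval: Manual
```

With Manual approval, even the first InstallPlan waits for approval; pending plans can be listed with `oc get installplan -n openshift-sandboxed-containers-operator`.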

Intel TDX support

Intel Trust Domain Extensions (TDX) is a hardware-based TEE technology that isolates virtual machines from the hypervisor and other VMs using CPU-enforced memory encryption and integrity protection. The pattern provides full Intel TDX support on bare metal deployments.

Key features:

Automatic hardware detection: Node Feature Discovery (NFD) detects TDX-capable CPUs and labels nodes with intel.feature.node.kubernetes.io/tdx=true

Remote attestation: Intel DCAP components (PCCS and QGS) enable quote generation and verification via the Intel PCS API

Transparent runtime selection: The kata-cc RuntimeClass automatically uses the TDX handler (kata-tdx) on labeled nodes

MachineConfig automation: Kernel parameters (kvm_intel.tdx=1) and vsock modules are applied automatically
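The kernel-argument part of that automation can be expressed as a standard MachineConfig. The sketch below shows only the kvm_intel.tdx=1 argument named above; the object name and the worker role are assumptions, and the vsock module configuration the pattern also applies is omitted.

```yaml
# Sketch: add the TDX kernel argument to worker nodes via the Machine Config Operator.
apiVersion: machineconfiguration.openshift.io/v1
kind: MachineConfig
metadata:
  name: 99-worker-enable-tdx            # hypothetical name
  labels:
    # Target the worker MachineConfigPool (assumed role for TDX hosts).
    machineconfiguration.openshift.io/role: worker
spec:
  kernelArguments:
    - kvm_intel.tdx=1
```

Applying a MachineConfig triggers a rolling reboot of the nodes in the targeted pool, so plan it as a maintenance operation.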

Deployment requirements:

Intel Xeon processors with TDX support (4th Gen Sapphire Rapids or newer)

BIOS/firmware with TDX enabled

Intel PCS API key (obtainable from Intel Trusted Services)

The pattern’s Intel DCAP chart deploys PCCS as a centralized caching service and QGS as a DaemonSet on TDX nodes. Quote generation happens within the TEE, with PCCS providing attestation collateral to Trustee for verification.

AMD SEV-SNP support

AMD Secure Encrypted Virtualization - Secure Nested Paging (SEV-SNP) is a hardware-based TEE technology that provides VM isolation through memory encryption and integrity protection. SEV-SNP extends AMD’s SEV technology with secure nested paging to protect against additional attack vectors. The pattern provides full AMD SEV-SNP support on bare metal deployments.

Key features:

Automatic hardware detection: Node Feature Discovery (NFD) detects SEV-SNP-capable processors and labels nodes with amd.feature.node.kubernetes.io/snp=true

Certificate chain-based attestation: AMD SEV-SNP uses a certificate chain model for attestation verification, eliminating the need for a collateral caching service like Intel’s PCCS

Transparent runtime selection: The kata-cc RuntimeClass automatically uses the SEV-SNP handler (kata-snp) on labeled nodes

MachineConfig automation: Kernel parameters for SEV-SNP enablement and vsock modules are applied automatically
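A RuntimeClass can also carry scheduling constraints so that pods using it land only on nodes NFD has labeled. The sketch below assumes a SEV-SNP-only cluster and a node-level handler named kata-snp, as described above; how the pattern actually maps kata-cc to the per-platform handler is not shown here, and a single RuntimeClass names only one handler.

```yaml
# Illustrative RuntimeClass for a SEV-SNP-only cluster (assumption).
apiVersion: node.k8s.io/v1
kind: RuntimeClass
metadata:
  name: kata-cc
# CRI handler configured on the node for SEV-SNP guests.
handler: kata-snp
scheduling:
  # Only schedule onto nodes that NFD labeled as SEV-SNP capable.
  nodeSelector:
    amd.feature.node.kubernetes.io/snp: "true"
```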

Deployment requirements:

AMD EPYC processors with SEV-SNP support (3rd Gen Milan or newer)

BIOS/firmware with SEV-SNP enabled

No external attestation service required (certificate chain-based model)

AMD SEV-SNP’s certificate chain approach simplifies the attestation infrastructure compared to Intel TDX, as the full certificate chain is embedded in the attestation evidence sent to Trustee for verification.

NVIDIA confidential GPU support (Technology Preview)

NVIDIA confidential GPUs with confidential computing firmware enable GPU-accelerated workloads to run inside TEEs with hardware-enforced memory encryption and attestation. The pattern’s baremetal-gpu topology provides support for NVIDIA confidential GPUs (H100, H200, B100, B200) on bare metal with either Intel TDX or AMD SEV-SNP as the host TEE platform.

Key features:

GPU passthrough via VFIO: GPUs are passed through to Kata confidential VMs using IOMMU and VFIO, providing native GPU performance

Confidential Computing Manager: NVIDIA CC Manager enforces confidential mode at the GPU firmware level

GPU attestation: The GPU’s attestation evidence is included in the TEE’s attestation report to Trustee

Kata device plugin: The NVIDIA Kata sandbox device plugin exposes GPUs as schedulable resources (nvidia.com/pgpu)

Multi-platform support: Works with both Intel TDX and AMD SEV-SNP host TEE platforms

Deployment requirements:

NVIDIA GPUs with confidential computing firmware (H100, H200, B100, B200)

Intel TDX or AMD SEV-SNP enabled bare metal host

IOMMU-capable system (kernel parameters applied via MachineConfig: intel_iommu=on or amd_iommu=on)

NVIDIA GPU Operator v26.3.0+

The pattern includes a sample CUDA workload (gpu-vectoradd) that demonstrates GPU-accelerated computation within a confidential container, verifying both GPU functionality and attestation integration. Testing has been performed with Intel TDX + H100; AMD SEV-SNP + GPU configurations are expected to work but have not been fully validated.
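A workload requests a confidential GPU through the nvidia.com/pgpu resource exposed by the Kata device plugin. The pod below is a sketch in the spirit of the gpu-vectoradd sample, not its actual manifest: the pod name, the image, and the use of the kata-cc RuntimeClass for GPU pods are assumptions, so check the RuntimeClass names on your cluster with `oc get runtimeclass`.

```yaml
# Sketch of a confidential GPU pod (names and image are placeholders).
apiVersion: v1
kind: Pod
metadata:
  name: gpu-vectoradd-sketch             # hypothetical name
spec:
  runtimeClassName: kata-cc              # assumed; the GPU runtime class may differ
  restartPolicy: Never                   # run the sample once and exit
  containers:
    - name: cuda-vectoradd
      image: registry.example.com/cuda-vectoradd:latest   # placeholder image
      resources:
        limits:
          # One passthrough GPU from the NVIDIA Kata sandbox device plugin.
          nvidia.com/pgpu: 1
```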

References

Related patterns:

Intel SGX protected Vault for Multicloud GitOps — Uses Intel SGX enclaves (Gramine) for application-level confidential computing, complementary to CoCo’s VM-based TEE approach

Layered Zero Trust — Demonstrates workload identity (SPIFFE/SPIRE), secrets management (Vault/ESO), and zero-trust principles that complement CoCo’s TEE isolation